In Part 3, we described the procedure for using CloudWorks APIs.

But the main question is: how can we use them? Having a dedicated screen in Anaplan itself is already a major step forward, and it will surely address most future needs.

Nevertheless, in our effort to open our platform as much as possible, we wanted to provide an example of how to use those APIs in a practical situation.

Here's our business case for this practical example:

The business would like an import to be triggered by an ad hoc event rather than by a scheduler. There is currently no way to do this with the native CloudWorks features. Thanks to the APIs, however, we can show a way to trigger an import after a file is uploaded to an S3 bucket.

Before starting

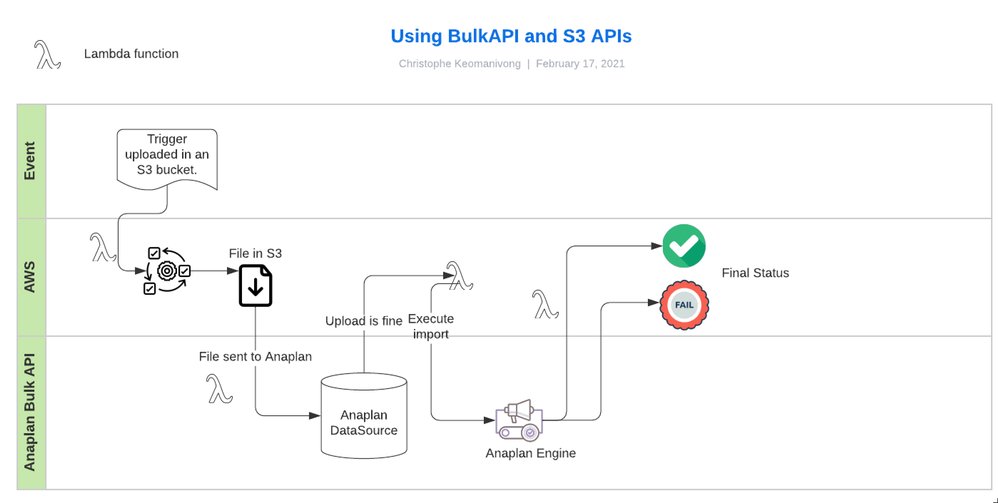

First, you might be wondering why those new APIs matter. This use case could indeed have been addressed with the Anaplan bulk APIs. But a quick look at the diagrams in this section shows why the new APIs are handy, at least for quick developments.

With the bulk APIs, the developer would have had to handle many more topics (Anaplan's APIs, the AWS S3 APIs, and the links between the two platforms). It can be done, but it adds complexity and, moreover, more things that can go wrong. Retrieving the status at each step adds further complexity, bringing this kind of development closer to building actual software.

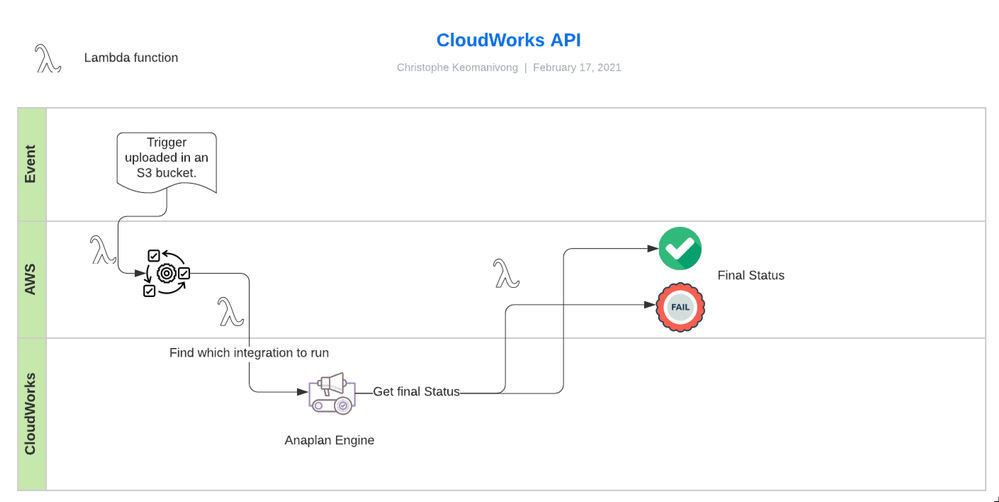

On the other hand, with the CloudWorks APIs as shown above, the flow is more straightforward (the file and the bucket are already specified in the integration), and Anaplan handles most of what happens when the job is executed.

In our example, we will trigger the integration after uploading a particular file to a dedicated bucket. This bucket will be different from the one set up in the CloudWorks connection.

Note: We could also have used the same bucket. Using a separate one illustrates some of the options available when IT would like to implement its own triggers.

Let's begin.

Step 1: Ensure all elements have been created

You will need to have set up a working integration in CloudWorks before starting.

This means:

- A working action (import, export, or process) in Anaplan

- A connection in CloudWorks

- A working integration using that connection in CloudWorks

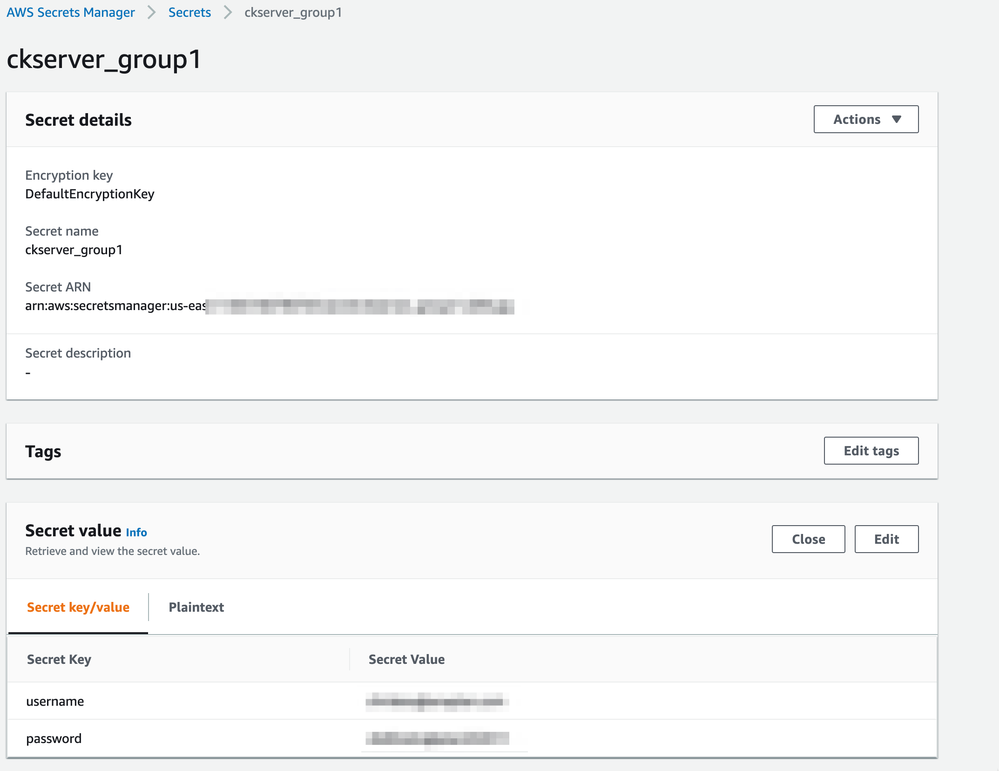

Step 2: Create secrets

We will store the Anaplan credentials in AWS Secrets Manager and use them whenever authentication to Anaplan is required.

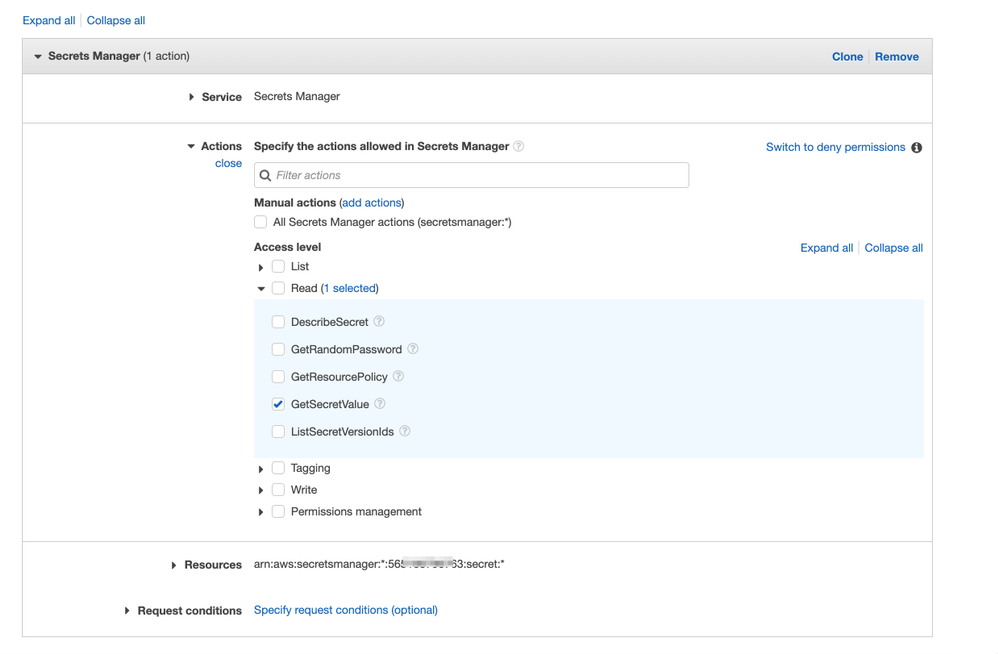

In the IAM console, create a policy that will be used to read the secret values.
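As an illustration, a policy that only allows reading one secret might look like the document built below. This is a minimal sketch, assuming the secret is stored in AWS Secrets Manager; the ARN is a hypothetical placeholder and should be replaced with the ARN of your own secret.

```python
import json


def build_secret_read_policy(secret_arn: str) -> dict:
    """Return an IAM policy document that allows reading a single secret."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Only the read action is granted, nothing else.
                "Action": ["secretsmanager:GetSecretValue"],
                "Resource": secret_arn,
            }
        ],
    }


# Hypothetical ARN, used here for illustration only.
EXAMPLE_SECRET_ARN = (
    "arn:aws:secretsmanager:eu-west-1:123456789012:secret:anaplan/credentials-AbCdEf"
)

if __name__ == "__main__":
    # Print the JSON that can be pasted into the policy editor of the IAM console.
    print(json.dumps(build_secret_read_policy(EXAMPLE_SECRET_ARN), indent=2))
```

Restricting the resource to a single ARN keeps the Lambda role from reading any other secret in the account.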

Step 3: Create a bucket

We could use the same bucket as the one that was used in CloudWorks, but it's not mandatory.

Step 4: Create a Lambda function

The code available here is a minimal package that we prepared to execute an integration.

These are the contents of the "LambdaPythonCodes.zip" file that you can download from this article:

- AnaplanAPI.py

- lambda_function.py

- RetrievalSecrets.py

The Python file AnaplanAPI.py contains two classes:

- AnaplanAuthHandler: This class takes care of authentication (basic authentication for now). Its main job is to obtain the tokenValue needed for subsequent API requests.

- AnaplanCloudWorks: This class provides functions that gather the information needed to trigger an integration, based on the integration's name.
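To make the roles of these two classes more concrete, here is a rough sketch of the requests involved. It is not the code from the zip file. The endpoint URLs, the response shapes (tokenInfo.tokenValue, integrations, integrationId), and all function names are assumptions to be checked against the Anaplan API documentation. Only the standard library is used so that the request-building logic can be inspected without network access.

```python
import base64
import json
import urllib.request

# Assumed endpoints; verify them against the current Anaplan documentation.
AUTH_URL = "https://auth.anaplan.com/token/authenticate"
CLOUDWORKS_URL = "https://api.cloudworks.anaplan.com/2/0/integrations"


def build_auth_request(user: str, password: str) -> urllib.request.Request:
    """Build the basic-authentication request that asks Anaplan for a token."""
    raw = f"{user}:{password}".encode("utf-8")
    encoded = base64.b64encode(raw).decode("ascii")
    return urllib.request.Request(
        AUTH_URL,
        data=b"",
        method="POST",
        headers={"Authorization": f"Basic {encoded}"},
    )


def extract_token(auth_response: dict) -> str:
    """Read tokenValue from the (assumed) shape of the authentication response."""
    return auth_response["tokenInfo"]["tokenValue"]


def token_headers(token_value: str) -> dict:
    """Headers sent with every request made after authentication."""
    return {
        "Authorization": f"AnaplanAuthToken {token_value}",
        "Content-Type": "application/json",
    }


def find_integration_id(listing: dict, name: str) -> str:
    """Return the ID of the integration called `name` in a listing payload."""
    for integration in listing.get("integrations", []):
        if integration.get("name") == name:
            return integration["integrationId"]
    raise LookupError(f"No integration named {name!r}")


def build_run_request(integration_id: str, token_value: str) -> urllib.request.Request:
    """Build the request that triggers one run of the given integration."""
    return urllib.request.Request(
        f"{CLOUDWORKS_URL}/{integration_id}/run",
        data=b"",
        method="POST",
        headers=token_headers(token_value),
    )


def send(request: urllib.request.Request) -> dict:
    """Send a request and decode the JSON response (requires network access)."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

A typical sequence would be: send the authentication request, extract the token, list the integrations with the token headers, look up the ID by name, then send the run request.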

"RetrievalSecrets.py" helps to get values from the secret created in Step 2.

"lambda_function.py" is the code that executes the integration.
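For readers who want to see what such a helper can look like, here is a minimal sketch. It assumes that the secret lives in AWS Secrets Manager and stores its values as JSON key/value pairs. The function name and the optional client parameter are illustrative and are not taken from the zip file.

```python
import json


def get_secret_values(secret_name: str, client=None) -> dict:
    """Fetch a secret and return its JSON key/value pairs as a dictionary."""
    if client is None:
        # boto3 is provided by the AWS Lambda Python runtime.
        import boto3

        client = boto3.client("secretsmanager")
    response = client.get_secret_value(SecretId=secret_name)
    # Secrets created as key/value pairs are returned as a JSON string.
    return json.loads(response["SecretString"])
```

Passing the client as a parameter makes it possible to exercise the function locally with a stub instead of a real AWS client.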

In that latter file, you can see that the integration name and the secrets details are hard-coded and they will need to be adapted to your situation:
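The general shape of such a handler can be sketched as follows. This is a hypothetical outline and not the file from the zip. The two constants stand in for the hard-coded values, the secret is assumed to contain "username" and "password" keys, and the three steps are passed in as functions so that the wiring can be tried without AWS or Anaplan access.

```python
import json

# Hypothetical values standing in for the hard-coded settings to adapt.
INTEGRATION_NAME = "S3 to Anaplan import"
SECRET_NAME = "anaplan/credentials"


def make_handler(get_secret, authenticate, run_integration):
    """Build a Lambda handler from three injected steps.

    get_secret(secret_name) returns a dict with the stored credentials,
    authenticate(user, password) returns an Anaplan token value,
    run_integration(name, token) triggers the integration and returns a dict.
    """

    def lambda_handler(event, context):
        secret = get_secret(SECRET_NAME)
        token = authenticate(secret["username"], secret["password"])
        result = run_integration(INTEGRATION_NAME, token)
        return {"statusCode": 200, "body": json.dumps(result)}

    return lambda_handler


if __name__ == "__main__":
    # Local dry run with stub steps; no AWS or Anaplan call is made.
    handler = make_handler(
        get_secret=lambda name: {"username": "user@example.com", "password": "x"},
        authenticate=lambda user, password: "dummy-token",
        run_integration=lambda name, token: {"integration": name, "started": True},
    )
    print(handler({}, None))
```

In a deployed function, the module would expose the result of make_handler under the name lambda_handler so that the Lambda runtime can find it.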

Info: We created a "Layer" that contains the requests package. We could have used the version of requests that ships with boto3, but the standalone requests package appears to be more up to date.

To learn how to create a layer, follow the instructions on this AWS page and use the "python.zip" file attached to this article as is.

Step 5: Set up the permissions

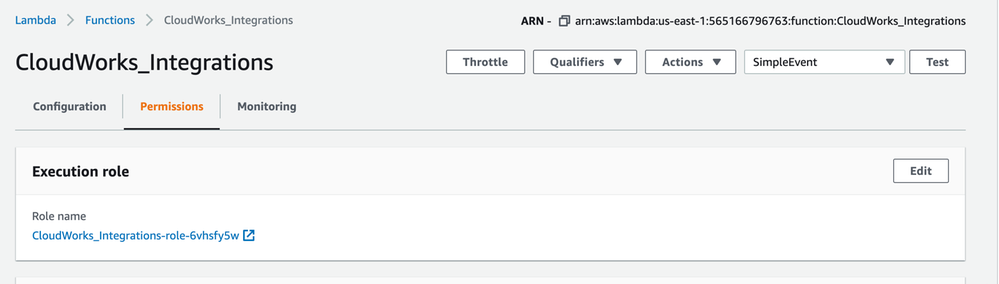

Click on "Permissions" and on the role name link.

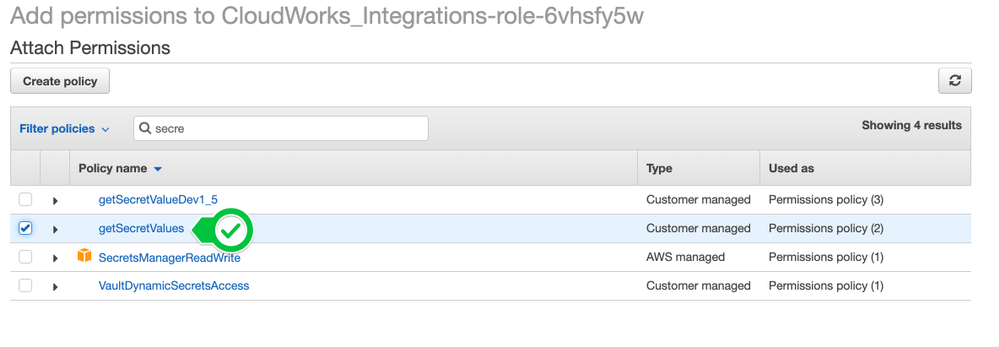

On the following screen, click on "Attach policies" and select the policy you've created in Step 2.

Now, click again on "Attach policies" and look for the secret policy you created in Step 2.

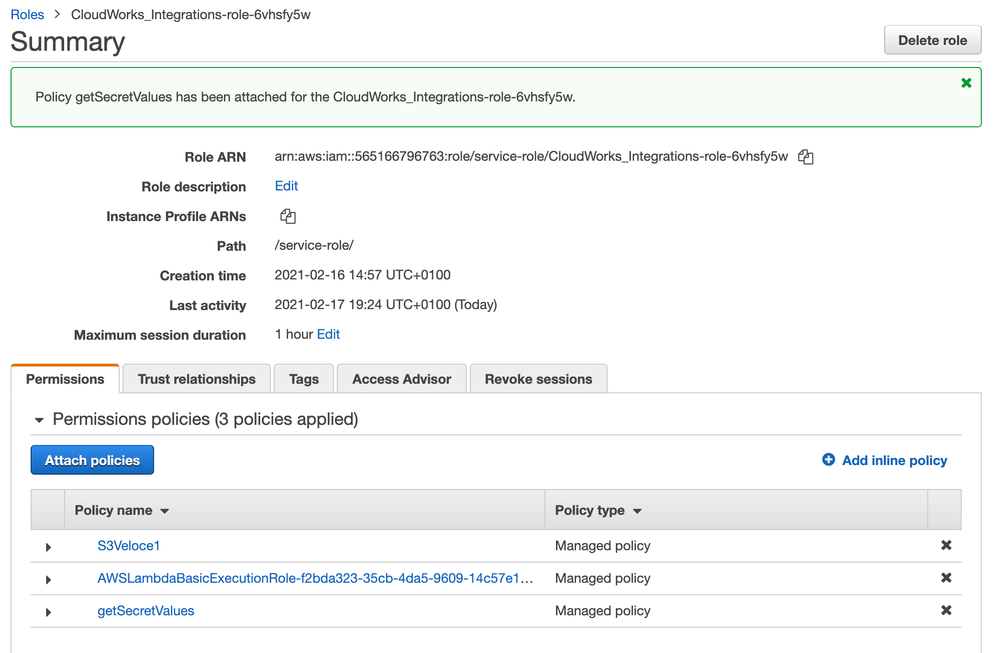

Now, you should have three lines:

Step 6: Test the lambda

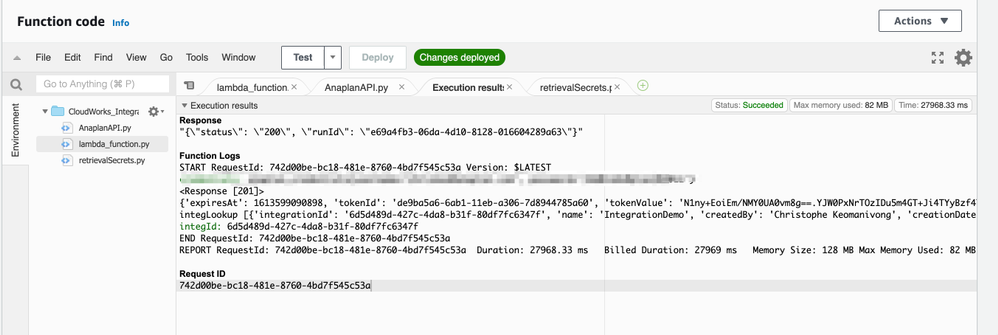

You can create a test event to check that the lambda already does what it is supposed to do, without the trigger event.

Just click on Test, create a test event (without amending anything), and click on Save.

A successful test should look as follows:

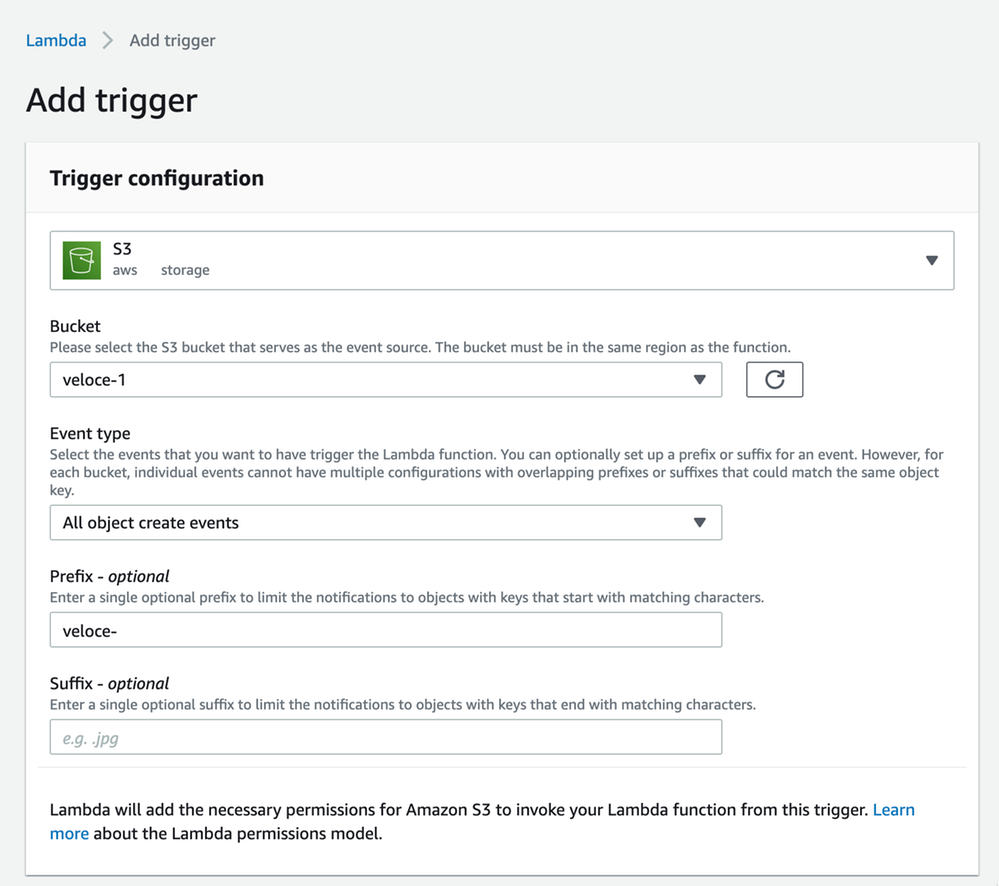

Step 7: Add the trigger event

The final step is to set up the trigger event, which is a simple upload of a file to a particular bucket. As we don't want to trigger an integration for every file that could be uploaded to the bucket, we will use a prefix.

To trigger the lambda, you'll need to upload a file whose name starts with the prefix "veloce-".

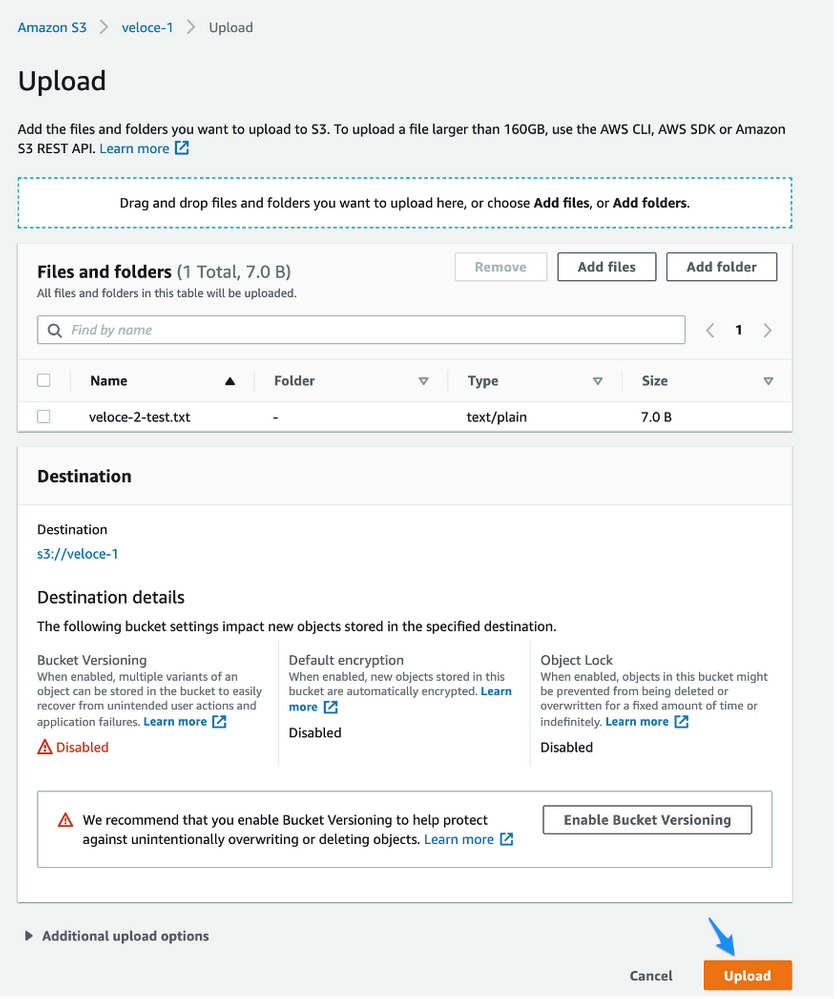
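The prefix filter configured on the trigger already prevents the lambda from firing for other files. As an extra safeguard, the function itself can inspect the S3 event it receives. The sketch below relies on the standard S3 notification structure (Records, s3, object, key); the helper names are hypothetical.

```python
from urllib.parse import unquote_plus

# Same prefix as the one configured on the S3 trigger.
TRIGGER_PREFIX = "veloce-"


def uploaded_keys(event: dict) -> list:
    """Return the decoded object keys contained in an S3 notification event."""
    keys = []
    for record in event.get("Records", []):
        if "s3" in record:
            # Keys arrive URL-encoded, with spaces encoded as "+".
            keys.append(unquote_plus(record["s3"]["object"]["key"]))
    return keys


def should_trigger(event: dict, prefix: str = TRIGGER_PREFIX) -> bool:
    """Tell whether at least one uploaded key starts with the prefix."""
    return any(key.startswith(prefix) for key in uploaded_keys(event))
```

A handler could call should_trigger(event) first and return early when it is False, for example when the function is run with a test event that contains no S3 record.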

We can now test it by uploading such a file:

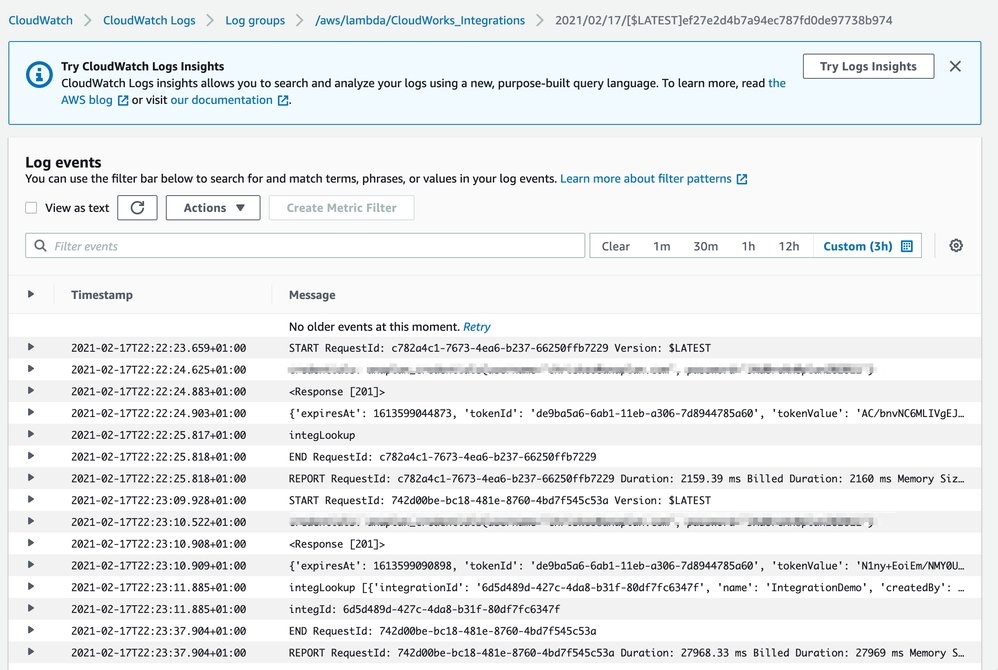

Go back to your lambda. If all is successful, you should see CloudWatch events similar to the following one:

You now have an integration from AWS S3 to Anaplan that can be conditionally triggered by an event!

The code used in this article is available (LambdaPythonCodes.zip); feel free to download it and use it.


Contributing authors: Pavan Marpaka, Scott Smith, and Christophe Keomanivong.